We still rely on hospital staff to tell whether a patient who is under medical treatment and cannot communicate is in pain. But nurses might soon be able to take a lunch break without worrying that their mystery coma patient will succumb while they're munching away.

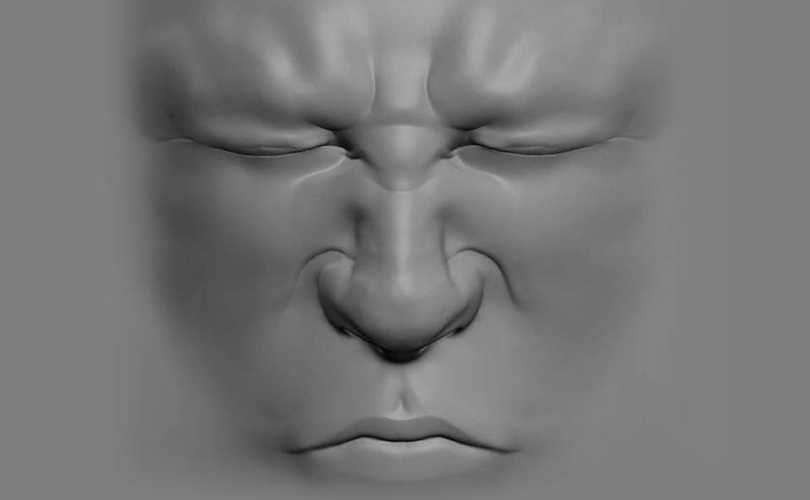

Researchers at UC San Diego have developed a computer vision algorithm that can assess pain levels by analyzing a patient's facial expressions. It's not the first such system, but the results of this new study are far more promising than those of any before it.

The report doesn't mention any specific hardware or software, but it does say that the model distinguished pain from no-pain conditions with "good-to-excellent accuracy." This held for both ongoing and transient pain.

What was particularly interesting was that the computer model actually managed to beat some humans: it detected discomfort in the young patients more reliably than trained nurses did. When the patients' parents were asked to judge whether their child was suffering, however, the computer model proved, to no one's surprise, to be less accurate than they were.

Considering that even the slightest error can alter a patient's fate, this is a promising step toward reducing bias in diagnosis. The vision algorithm is far from perfect and probably won't replace live physicians any time soon, but it could serve as a useful tool for confirming human assessments in the future.

The specifics can be found in the original report (Automated Assessment of Children’s Postoperative Pain Using Computer Vision) with a side of patent jargon.
